The Case for Precision Healthcare 4.0

For decades, healthcare systems have operated in a reactive mode—intervening only after diseases manifest. This delayed approach drives up healthcare costs, leads to avoidable hospitalizations, and strains care infrastructures. The economic burden is profound, with chronic and late-diagnosed conditions consuming the majority of health expenditures. Beyond the financial impact, this model undermines quality of life, shortens healthspan, and exacerbates health disparities—leaving individuals and societies to bear the cumulative cost of missed opportunities for early, personalized intervention.

With rapidly increasing healthcare demands, aging populations, and the growing necessity for continuous disease monitoring, global healthcare systems now face unprecedented challenges due to a lack of efficient, accessible, and reliable diagnostic and therapeutic solutions [1]. In the United States, chronic and mental health conditions already account for approximately 90% of annual healthcare expenditures, a burden projected to intensify dramatically as populations age and multimorbidity rises [2]. In Europe, non-communicable diseases represent around 80% of the overall disease burden and constitute the largest share of healthcare costs, with multimorbidity affecting more than 40% of people aged 65 and over [3].

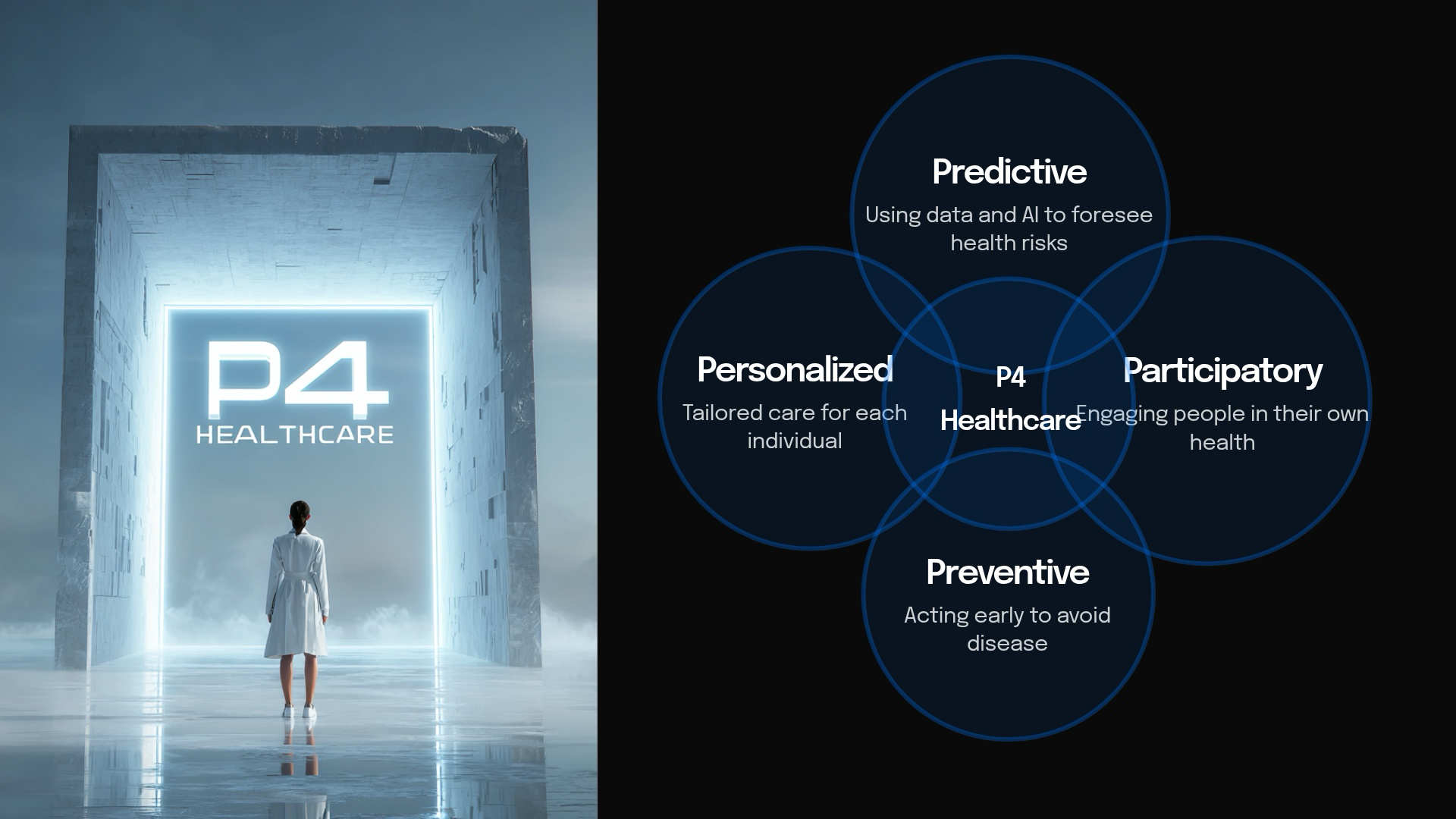

The Path to Precision Healthcare 4.0

To move beyond the limitations of reactive healthcare, we must embrace Precision Healthcare 4.0, the next generation of predictive, preventive, personalized, and participatory medicine. Central to this paradigm shift is the responsible application of AI coupled with digital biomarker technologies. Together, they have the potential to transform the assessment, monitoring, and understanding of health conditions, enabling earlier and more accurate interventions.

What are Digital Biomarkers?

Digital biomarkers are objective, quantifiable measures of physiological or behavioral data collected through digital devices such as smartphones, wearables or sensors. They serve as indicators of biological processes, pathological processes, or responses to an exposure or intervention. This definition aligns with those established by both the European Medicines Agency (EMA) and the U.S. Food and Drug Administration (FDA).

Opportunities Afforded by Digital Biomarkers

Digital biomarkers offer powerful opportunities to transform healthcare by enabling more precise, timely, and personalized insights. The FDA-NIH BEST resource defines seven biomarker categories [4]. Six of them, all but the safety category, are considered here:

- Susceptibility/Risk: Predict the potential for developing a medical condition in individuals without current symptoms.

- Predictive: Predict a patient’s likely response to a specific medical intervention.

- Diagnostic: Detect or confirm the presence of a disease or its subtypes.

- Prognostic: Identify the likelihood of disease progression, recurrence, or a clinical event in patients with an existing medical condition.

- Pharmacodynamic/Response: Measure the outcome of a therapeutic intervention and indicate whether a biological response has occurred.

- Monitoring: Assess disease status or response to treatment when measured repeatedly over time.

These six categories demonstrate how digital biomarkers enable the transition from reactive, episodic assessments to continuous, proactive, and personalized health monitoring [5].

Why Connected Speech Offers an Unprecedented Window into Health

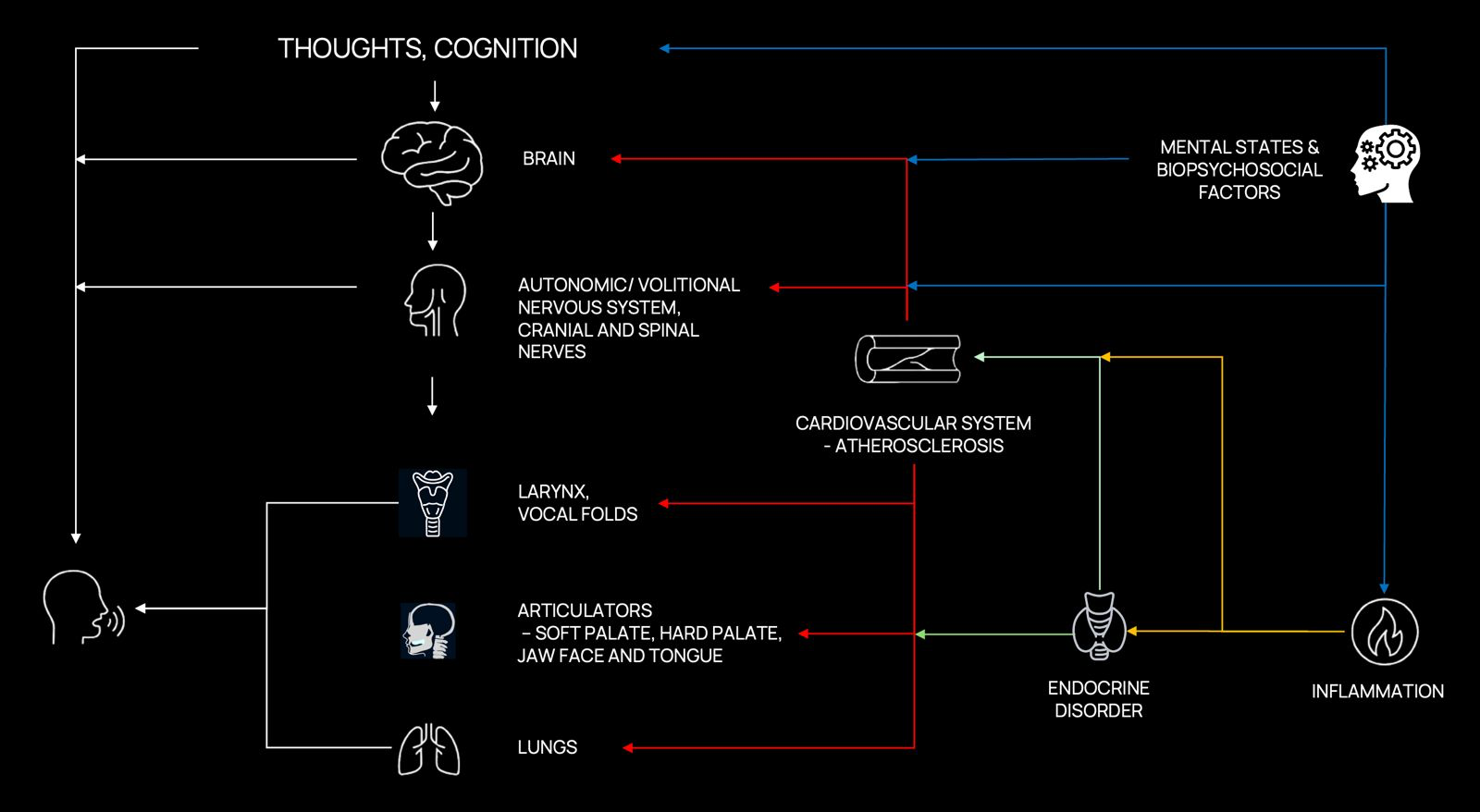

Quantifiable and objective metrics derived from speech and language stand out as one of the most promising types of digital biomarkers, as they offer a uniquely rich and multidimensional window into human health. Speech production is a complex behavior that requires the precise coordination of multiple neural systems involved in memory, attention, executive control, and motor planning. Subtle disruptions in these interconnected pathways, whether neurological, psychiatric, cardiovascular, metabolic, or systemic, manifest as measurable alterations in acoustic, prosodic, temporal, and linguistic characteristics [6]. While these early-stage alterations may go unnoticed by casual observers or even clinicians, they can be systematically captured and analyzed by automatic speech analytics tools that use speech recognition and downstream natural language processing to extract expert-engineered metrics.

Figure 1. Adapted from Sara JDS, Orbelo D, Maor E, Lerman LO, Lerman A. Novel Voice Biomarkers for the Remote Detection of Disease. Mayo Clinic Proceedings. 2023;98(9):1353–1375. The production of speech requires tightly coordinated orchestration among multiple physiological and cognitive systems, including the central nervous system (brain and cognition), peripheral nervous system (autonomic and cranial nerves), vocal apparatus (larynx, vocal folds), articulators (soft palate, jaw, face, tongue), and respiratory system (lungs). Disruptions or impairments within any of these interconnected domains manifest as measurable changes in acoustic and linguistic properties of speech. These measurable alterations serve as sensitive indicators of neurological, psychiatric, and systemic health conditions (cardiovascular diseases, metabolic disorders like diabetes, and immune/inflammatory disorders).
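To make the idea of extracting temporal and linguistic metrics concrete, the following minimal sketch computes three simple measures from a word-level transcript with timestamps, such as a speech recognizer might return. The `Word` structure, the function name, and the 0.25-second pause threshold are assumptions made for this illustration; they are not the metrics or thresholds used in the cited studies.

```python
from dataclasses import dataclass


@dataclass
class Word:
    text: str
    start: float  # onset in seconds
    end: float    # offset in seconds


def speech_features(words, pause_threshold=0.25):
    """Compute simple temporal and lexical measures from time-aligned words."""
    if not words:
        raise ValueError("at least one word is required")
    total = words[-1].end - words[0].start
    # Silent gaps between consecutive words at or above the threshold count as pauses.
    gaps = [b.start - a.end for a, b in zip(words, words[1:])]
    pause_time = sum(g for g in gaps if g >= pause_threshold)
    tokens = [w.text.lower() for w in words]
    return {
        "speech_rate_wpm": 60.0 * len(words) / total if total > 0 else 0.0,
        "pause_ratio": pause_time / total if total > 0 else 0.0,
        "type_token_ratio": len(set(tokens)) / len(tokens),
    }


# Hypothetical time-aligned transcript of a short utterance.
sample = [
    Word("the", 0.0, 0.2), Word("boy", 0.3, 0.6),
    Word("takes", 1.2, 1.6), Word("the", 1.7, 1.8), Word("cookie", 1.9, 2.4),
]
features = speech_features(sample)
```

Real pipelines would add acoustic and prosodic measures computed from the audio signal itself; this sketch only covers what can be derived from word timings and text.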

To realize this potential, ongoing efforts are building large, diverse, and longitudinally sampled datasets linking speech to structured clinical, behavioral, and neurobiological data — essential for understanding how such dimensions evolve over time within individuals, and for enabling personalized baselining and longitudinal tracking. AI models trained on such data are beginning to achieve clinically actionable performance across a spectrum of disorders, with demonstrated applications in remote monitoring, early detection, and treatment response prediction.
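Personalized baselining can be illustrated with a small sketch: a new measurement is compared with the same person's earlier recordings rather than with a population norm. The function name, the minimum of five sessions, and the example values are assumptions chosen for illustration only.

```python
from statistics import mean, stdev


def deviation_from_baseline(history, new_value, min_sessions=5):
    """Return the z-score of a new measurement against a person's own history.

    Returns None when the history is too short or shows no variability.
    """
    if len(history) < min_sessions:
        return None
    spread = stdev(history)
    if spread == 0:
        return None
    return (new_value - mean(history)) / spread


# Hypothetical weekly speech-rate values (words per minute) for one person.
baseline = [150.0, 148.0, 152.0, 151.0, 149.0]
z = deviation_from_baseline(baseline, 140.0)
```

A strongly negative `z` here would indicate that the new recording is much slower than this person's usual speech. What size of deviation is clinically meaningful is a validation question that this sketch does not answer.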

Speech biomarkers integrate naturally into advanced digital health ecosystems, particularly through personal sensing (also known as ubiquitous sensing), big data analytics, and mobile health (mHealth) platforms [7] [8]. In personal sensing, everyday devices continuously collect behavioral and physiological signals in natural environments, enabling speech data to be captured passively during routine interactions or actively through brief structured tasks. When combined with advanced big data analytics and machine learning algorithms, these longitudinal speech datasets reveal nuanced, individual-specific patterns that are often undetectable with traditional, episodic clinical evaluations.

Mobile health platforms further extend this potential by embedding speech analysis into smartphone applications, supporting seamless and low-burden data collection. Together, these technologies transform speech signals into actionable, real-time or longitudinal insights. This enables earlier intervention, more precise personalization of treatment plans, and reduced reliance on in-person clinical visits, thereby advancing a proactive and participatory model of healthcare.

Empirical Evidence: Speech Biomarkers Across Neurological, Psychiatric, Cardiovascular, Metabolic, and Immune/Inflammatory Conditions

Speech and language biomarkers have evolved from a promising hypothesis into a rapidly growing area of empirical research across multiple disease domains. Evidence from longitudinal cohorts, clinically characterized populations, and biomarker-linked investigations suggests that subtle alterations in speech and language can precede overt clinical symptoms by years, providing objective, non-invasive indicators of underlying pathology.

Neurological Conditions

The most compelling evidence to date comes from disorders affecting the brain, where speech offers a particularly sensitive window into changes in cognition, language, affect, and motor control. Here we focus on two prevalent neurodegenerative diseases — Alzheimer’s disease and Parkinson’s disease — as clear demonstrations of how speech and language biomarkers can capture clinically meaningful functional decline long before conventional diagnosis.

Alzheimer’s disease is still too often approached as a condition detected late in life. Yet measurable functional decline begins much earlier — potentially decades before a diagnosis is made. By the time memory complaints reach clinical attention, substantial neuronal loss and network-level disruption may already be underway. Even with major advances in amyloid, tau, PET, and blood-based biomarkers, a critical gap persists: no scalable, real-world approach yet exists for detecting and continuously tracking functional brain change at the population level.

Speech and language biomarkers address this gap directly [9] [10] [11]. The most striking demonstration of their long-term sensitivity comes from the Nun Study — a landmark longitudinal investigation in which 678 participants were followed for more than three decades. Early-life linguistic complexity in autobiographical writing predicted late-life cognitive status and neuropathologically confirmed Alzheimer’s disease more than 50 years later [12]. The power of this finding rests not only on its extraordinary temporal span, but on the rigor of its design: the relative homogeneity of education, living environment, and healthcare access substantially reduced lifestyle confounding, while the longitudinal depth provided an unusually clear view of how early language patterns relate to late-life neurodegenerative outcomes.

Subsequent work has reinforced this signal across independent cohorts and methodological paradigms. In a large population-based study, linguistic biomarkers predicted Alzheimer’s onset an average of 7–8 years before clinical diagnosis in cognitively normal individuals [13] — underscoring that speech does not merely reflect late-stage impairment, but preserves quantifiable traces of incipient brain dysfunction long before conventional clinical recognition.

This picture is further strengthened by recent biomarker-grounded evidence. In our own research, spontaneous speech distinguished CSF-validated Alzheimer’s disease from cognitively healthy controls with strong cross-validated performance, using a compact, interpretable set of linguistic features derived from connected speech [14]. By anchoring speech analysis to biologically verified disease status rather than clinical diagnosis alone, this work provides particularly compelling evidence that connected speech constitutes a meaningful functional complement to established molecular biomarkers — not a substitute, but a necessary addition.

Looking ahead, the most promising frontier lies in integrating AI-driven speech analysis with molecular and imaging biomarkers to model brain health trajectories comprehensively — linking pathology, neural system disruption, and behavioral change across time. Speech is emerging not merely as an accessible digital signal, but as a foundational measurement layer for functional assessment in Alzheimer’s prevention, early detection, and precision care.

Parkinson’s disease is a highly heterogeneous neurodegenerative disorder presenting with diverse symptoms, underlying causes, and individual patient experiences [15]. While PD research has historically focused on its motor manifestations, it is now increasingly evident that PD is not simply a movement disorder but a complex neuropsychiatric condition [16]. Neuropsychiatric symptoms — including mood changes, depression, anxiety, apathy, impulse control disorders, and cognitive impairment — carry an equal or even greater impact on patients’ quality of life than motor symptoms alone [17]. This broader understanding is now supported at the mechanistic level by a landmark Nature study reframing PD as a somato-cognitive action network (SCAN) disorder — demonstrating, across 863 participants and 11 independent cohorts, that all major PD therapeutic targets are selectively connected to the SCAN, a network coordinating whole-body action, arousal, and cognition, rather than to effector-specific motor circuits [18]. This finding provides a compelling neural architecture for the full neuropsychiatric complexity of PD — and underscores why its clinical presentation extends so far beyond movement.

Yet despite this complexity, clinical diagnosis still relies primarily on motor dysfunction: bradykinesia together with rigidity and/or resting tremor. By the time these defining signs become apparent, an estimated 50–60% of dopaminergic neurons in the substantia nigra have already been lost, alongside up to 70% depletion of striatal dopamine [19] — representing an already late stage for interventions aimed at slowing or modifying disease progression. PD is now the fastest-growing neurological disease worldwide, characterized by intracellular α-synuclein pathology and progressive dopaminergic neurodegeneration [20], yet the diagnostic framework has not kept pace with the biological reality of the disease. Emerging evidence demonstrates that subtle speech and language alterations can precede motor dysfunction and clinical diagnosis by up to a decade [21] — creating a large, and still critically underused, window for earlier functional detection.

Speech in PD captures dysfunction across multiple interacting systems simultaneously: motor timing and sequencing, sensorimotor integration, respiratory-phonatory control, and higher-order cognitive-linguistic processing. This multidimensional sensitivity is what makes speech particularly powerful — reflecting the full neuropsychiatric complexity of the disease rather than its motor surface alone. What Parkinson’s care is still missing is precise, objective, and frequent measurement of brain function — not only at diagnosis, but continuously over the disease course. Clinical rating scales, administered episodically in controlled settings, cannot capture day-to-day fluctuations, medication effects, or the subtle longitudinal trajectories that define individual disease progression. Digital biomarkers derived from speech are uniquely positioned to deliver exactly this: continuous longitudinal measurement that is non-invasive, scalable, and sensitive to functional change at a resolution that conventional clinical tools cannot approach.

Psychiatric Conditions

Unlike the neurodegenerative disorders discussed above, psychiatric conditions still lack clinically established blood-based biomarkers or other objective diagnostic measures that are sufficiently validated for routine practice. Diagnosis therefore continues to rely predominantly on symptom reports, clinician observation, and categorical criteria, despite growing recognition that these approaches are often insensitive to subtle early-stage change and poorly suited to longitudinal monitoring [22] [23] [24].

The consequences of this gap are substantial. In bipolar disorder, for example, diagnosis and optimal treatment are often delayed by approximately nine years after an initial depressive episode, illustrating the broader difficulty of identifying psychiatric illness early and accurately in the absence of objective markers [25] [26].

This is precisely why speech and language biomarkers are of particular interest in psychiatry. Unlike conventional assessments, speech provides a continuous, non-invasive, and behaviorally rich measurement channel shaped by affective state, psychomotor function, cognitive organization, and pragmatic communication. It thus offers access to core dimensions of psychiatric illness that remain difficult to quantify reliably with standard instruments alone.

Major depressive disorder is one of the leading causes of disability worldwide, yet it remains systematically under-detected and poorly monitored between clinical encounters. The disorder is characterized by pervasive changes in affect, psychomotor function, cognitive processing, and social engagement — all of which leave measurable traces in the way individuals speak. This makes speech a uniquely sensitive and ecologically valid signal for tracking depressive states continuously and non-invasively [27] [28] — something that cannot be achieved through conventional self-report questionnaires and episodic clinical interviews. The clinical consequences of this monitoring gap are well-documented. Without objective, continuous measurement, depressive episodes are frequently missed in their early stages, treatment response goes undetected, and relapse occurs without timely clinical recognition [29].

Schizophrenia imposes a profound and multidimensional burden on language and communication. Disturbances in pragmatic competence, semantic coherence, and emotional expressivity are among the most characteristic and clinically consequential features of the disorder — not peripheral symptoms, but core expressions of the underlying disruption to semantic networks, social-cognitive processing, and executive function [30] [31] [32]. These disturbances have direct consequences for social functioning, occupational capacity, and quality of life, and they often emerge subtly in the prodromal period — years before psychosis is formally diagnosed [33] [34].

Cardiovascular, Metabolic, and Immune/Inflammatory Conditions

Beyond the central nervous system, speech biomarkers are beginning to reveal clinically meaningful signals of systemic disease. The mechanistic rationale is clear: voice production depends on the tightly coordinated interplay of cardiopulmonary function, autonomic regulation, laryngeal neuromuscular control, endocrine balance, and immune integrity. Disruptions to any of these systems — through cardiovascular disease, metabolic dysregulation, or systemic inflammation — can perturb phonation in reproducible, measurable ways. The association between vocal biomarkers and systemic disease reflects multiple converging pathways: autonomic dysregulation of the vagus nerve and its intimate relationship with cardiac function, inflammatory effects on laryngeal tissue integrity, and neuromuscular alterations driven by endocrine and immune dysfunction — all of which leave detectable traces in the acoustic and temporal properties of voice [6].

In cardiovascular disease, the evidence is most developed. Heart failure affects over 64 million individuals globally and results in more than one million hospital admissions annually in both the US and Europe, yet timely detection of decompensation remains one of the most persistent unsolved problems in chronic disease management. A recent systematic review of studies in coronary artery disease, pulmonary hypertension, and heart failure found that specific vocal profiles are associated with increased risk of subsequent hospitalization, mortality, and incident cardiac events. These findings indicate that voice analysis may support risk stratification and remote monitoring, although further methodological standardization is required before routine clinical deployment [35].

Similar associations have been reported for metabolic and inflammatory conditions. For example, machine-learning models integrating voice features with clinical biomarkers have demonstrated robust predictive performance for type 2 diabetes mellitus [36].

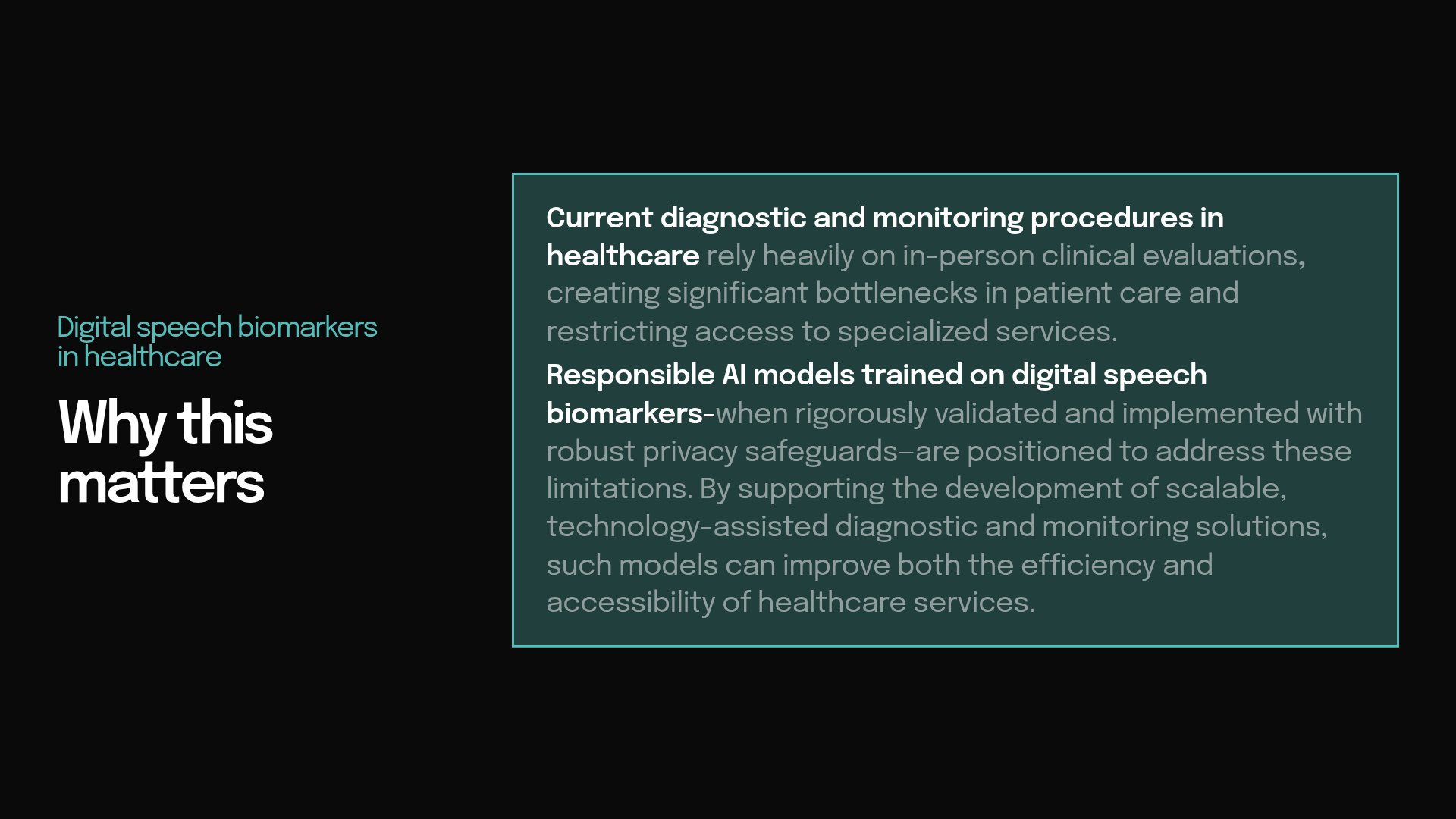

Responsible AI: From Technical Promise to Clinical Trust

To fully realize the promise of digital speech biomarkers in Precision Healthcare 4.0, their development and deployment must be guided by rigorous Responsible AI principles. While these technologies offer unprecedented opportunities for continuous, non-invasive monitoring across the lifespan, they also introduce unique risks in high-stakes healthcare settings — risks that cannot be ignored if the goal is equitable, safe, and clinically meaningful impact [37].

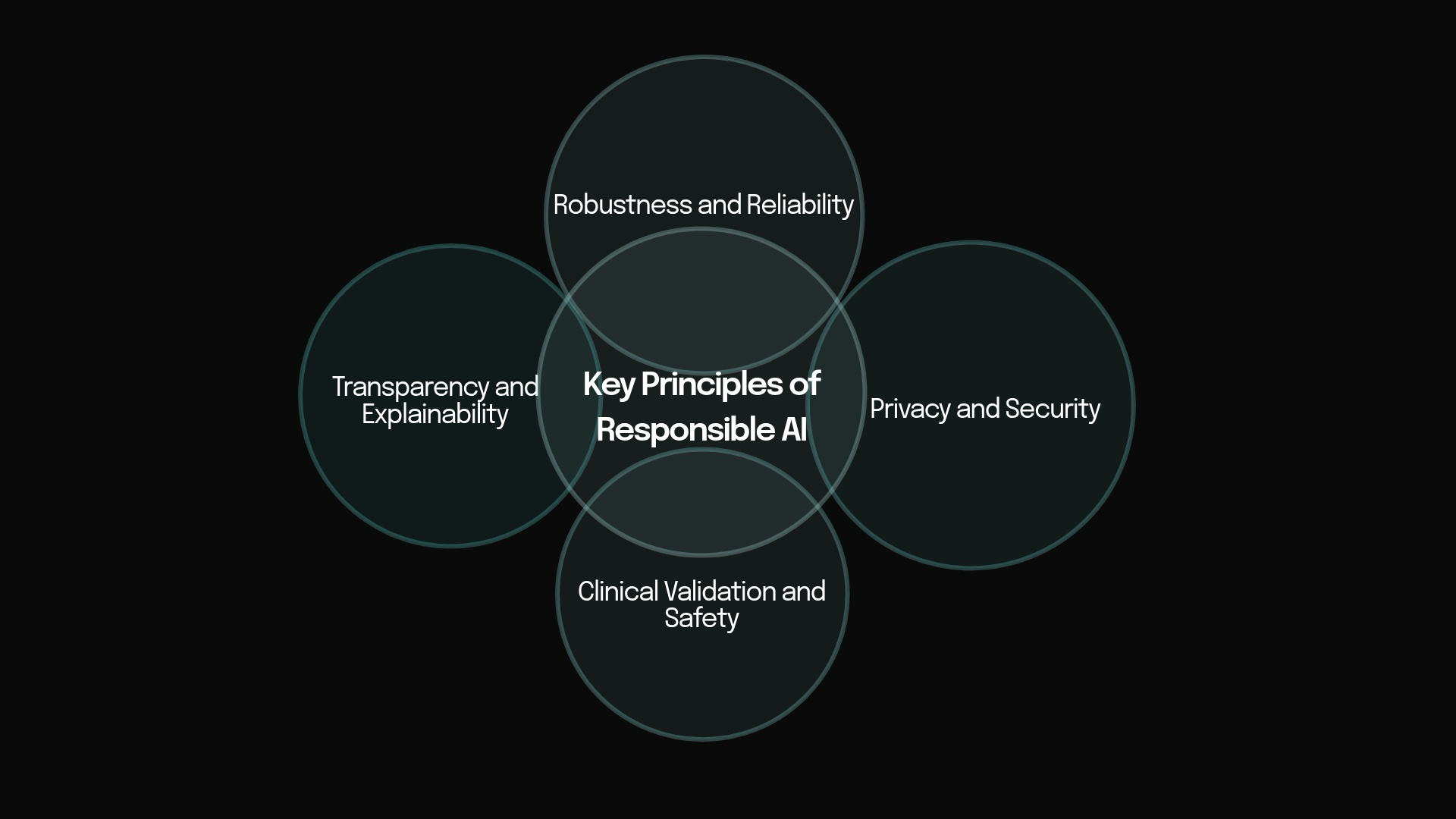

Key Principles of Responsible AI

- Privacy and Security: Voice recordings are uniquely identifiable biometric data that can reveal health status, identity, and personal context. Privacy-by-design techniques, such as on-device processing, federated learning, and differential privacy, are essential to protect patient information while still enabling powerful analysis.

- Transparency and Explainability: Clinicians and patients must understand why a model reached a particular conclusion. Highlighting the specific acoustic or linguistic features driving a prediction builds trust and supports shared clinical decision-making.

- Robustness and Reliability: Speech biomarkers must perform consistently across diverse recording devices, acoustic environments, languages, accents, and real-world conditions. Rigorous testing for generalizability ensures models do not fail when moved from controlled studies into everyday use.

- Clinical Validation and Safety: Speech-based models require prospective, multi-center clinical studies to demonstrate safety, efficacy, and real-world utility, followed by continuous post-market surveillance.

Together, these four principles ensure that speech biomarkers evolve from promising research tools into trustworthy and scalable components of Precision Healthcare 4.0.
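As one concrete instance of the privacy-by-design techniques listed above, the sketch below releases the mean of a bounded speech feature with Laplace noise, the textbook mechanism for differential privacy. It assumes the number of participants is public and that neighboring datasets differ in one person's value; the function name, bounds, and parameters are hypothetical and chosen for illustration.

```python
import random


def private_mean(values, lower, upper, epsilon, rng=None):
    """Release the mean of bounded values with Laplace noise (epsilon-DP)."""
    if not values or epsilon <= 0 or upper <= lower:
        raise ValueError("need values, epsilon > 0 and upper > lower")
    rng = rng or random.Random()
    # Clip so that no single value can fall outside the declared bounds.
    clipped = [min(max(v, lower), upper) for v in values]
    true_mean = sum(clipped) / len(clipped)
    # Replacing one person's value moves the mean by at most this much.
    sensitivity = (upper - lower) / len(clipped)
    scale = sensitivity / epsilon
    # The difference of two exponential draws follows a Laplace distribution.
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return true_mean + noise
```

Smaller values of `epsilon` add more noise and give stronger privacy. Choosing `epsilon` and the clipping bounds is a policy decision that depends on the deployment.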

Why Do Many Existing AI Solutions Fall Short?

Large language models (LLMs), as a class of foundation models, have demonstrated remarkable success across diverse real-world application domains, from information retrieval and document analysis to coding assistance and conversational interfaces. Yet the strengths that make these systems powerful in general-purpose language tasks do not automatically translate to high-stakes sectors such as healthcare. In clinical settings, these models often fail to meet the stricter requirements of privacy preservation, interpretability, robustness, and reliable deployment, and they frequently underperform compared to expert-engineered supervised models that are purpose-built for the domain.

This gap is most pronounced in speech-based healthcare applications. Commercial models typically rely on cloud-based APIs, requiring sensitive patient data to be transmitted to third-party infrastructures. For speech data, this is particularly problematic: voice recordings are not simply another modality, but uniquely identifiable biometric data that can reveal health status, identity, and personal context. Even when formal compliance frameworks such as HIPAA or GDPR are in place, this mode of deployment introduces serious challenges for privacy, governance, and institutional control over clinically sensitive information.

Open-weight models do not resolve these concerns. Although they can be deployed locally, they remain constrained by limited domain specificity, weak accountability structures, and insufficient interpretability. More fundamentally, they are optimized for broad linguistic competence rather than for biologically grounded, clinically validated measurement — creating a fundamental mismatch between the statistical objectives of large foundation models and the evidentiary standards required in medicine.

A further obstacle is computational feasibility. Large-scale models are poorly suited to edge deployment and low-latency clinical environments where privacy-preserving, real-time inference is essential. This is particularly consequential for continuous monitoring, assistive systems, and healthcare robotics, where clinical value depends not only on what a model predicts, but on whether it can operate securely, efficiently, and reliably in real-world care settings.

Equally critical is the problem of generalizability. Models trained on narrow, curated, or demographically imbalanced datasets often perform well under controlled conditions yet fail when exposed to the heterogeneity of real clinical populations. Many current approaches compound this by relying on high-dimensional representations without grounding in speech science or clinical theory, capturing superficial statistical patterns rather than clinically meaningful signals. In healthcare, such failures are not merely technical shortcomings — they compromise safety, undermine trust, and risk amplifying existing disparities in care. These risks become still more serious when model behavior remains opaque, making errors difficult to interpret, validate, or correct after deployment.

Exaia’s Competitive Advantage: Responsible AI Without Compromising Performance

At Exaia, we take a fundamentally different approach. We do not treat healthcare AI as a scale problem alone; we treat it as a scientific and clinical measurement problem. Our models are built from the outset to meet the demands of high-stakes healthcare: privacy preservation, interpretability, robustness under real-world variability, computational efficiency, and clinically meaningful validation.

This approach begins from a simple premise: in speech-based healthcare, the most reliable models are not those that maximize representational scale, but those that maximize clinical relevance. We therefore prioritize expert-engineered acoustic and linguistic biomarkers grounded in speech science, neuroscience, psychiatry, and clinical research. This yields models that remain transparent at the level of individual features, biologically interpretable, and sufficiently data-efficient for the realities of clinical datasets, which are often small, heterogeneous, and expensive to collect.
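Feature-level transparency can be illustrated with a minimal sketch of a logistic model whose prediction decomposes into one additive contribution per feature. The weights, bias, and feature values below are hypothetical and serve only to show the mechanics; they do not come from a trained or validated model.

```python
import math


def explain_prediction(weights, bias, features):
    """Return the probability and ranked per-feature contributions of a logistic model."""
    contributions = {name: weights[name] * value for name, value in features.items()}
    logit = bias + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-logit))
    # Sort so the features that moved the prediction most come first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


# Hypothetical weights and standardized feature values, for illustration only.
weights = {"pause_ratio": 1.2, "speech_rate": -0.8, "type_token_ratio": -0.5}
features = {"pause_ratio": 1.5, "speech_rate": -1.0, "type_token_ratio": 0.2}
probability, ranked = explain_prediction(weights, bias=-0.5, features=features)
```

Because every term in the sum maps to a named speech measure, a reviewer can see which features pushed the prediction up or down and by how much.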

A central component of our strategy is the systematic evaluation of the accuracy–transparency trade-off. We have conducted extensive experiments to determine, across clinical tasks, when fully interpretable models are sufficient and when higher-dimensional representations provide a justified performance gain. This does not mean excluding transformer-based embeddings categorically. Where such representations measurably improve predictive performance, we incorporate them selectively within hybrid architectures. We benchmark them against strong interpretable baselines, integrate them only when they deliver clear incremental value, and subject them to explicit explainability analyses to preserve clinical accountability. In this way, high-dimensional features serve the model rather than define it.
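The decision rule described here, adopting higher-dimensional representations only when they add clear value over an interpretable baseline, could be sketched as follows. The function name, the minimum-gain threshold of 0.02, and the majority-of-folds consistency check are assumptions for illustration, not the criteria actually used in these experiments.

```python
from statistics import mean


def justify_added_complexity(baseline_scores, hybrid_scores, min_gain=0.02):
    """Decide whether a hybrid model earns its extra complexity.

    Both lists hold per-fold scores (for example AUC) from the same folds.
    """
    if len(baseline_scores) != len(hybrid_scores) or not baseline_scores:
        raise ValueError("scores must be paired and non-empty")
    gains = [h - b for b, h in zip(baseline_scores, hybrid_scores)]
    mean_gain = mean(gains)
    # Require a minimum average gain and an improvement in most folds.
    consistent = sum(g > 0 for g in gains) > len(gains) / 2
    accepted = mean_gain >= min_gain and consistent
    return accepted, mean_gain
```

A production protocol would typically replace the fixed threshold with a proper statistical comparison on held-out data.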

This strategy directly addresses the limitations of generic LLM-based approaches. By favoring domain-specific, theory-informed feature spaces, we reduce dependence on cloud-scale infrastructure and enable privacy-preserving, computationally efficient deployment in edge and low-latency environments. By anchoring model behavior in expert-engineered biomarkers, we improve interpretability and facilitate validation, troubleshooting, and regulatory scrutiny. By introducing complexity only when it yields robust and clinically meaningful gains, we ensure that model design remains aligned with the evidentiary standards of medicine. And by grounding feature construction in multidisciplinary scientific knowledge rather than purely statistical abstraction, we improve generalizability across populations, devices, acoustic environments, languages, and clinical contexts.

Our approach is equally defined by privacy-by-design. All data are encrypted in transit using TLS and mutual TLS (mTLS). Sensitive data, including audio recordings, are de-identified on the client device using an x-vector-based audio de-identification algorithm before secure transmission. Demographic information is encrypted and stored separately from audio files as an additional safeguard. Role-based access control (RBAC) and multi-factor authentication (MFA) further ensure that only authorized individuals can access sensitive data. In clinical speech AI, privacy cannot be retrofitted after deployment; it must be embedded into the system architecture from the outset.
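The combination of role-based access control and multi-factor authentication can be reduced to a very small check, sketched below. The role names and permission strings are hypothetical and do not describe the actual access policy.

```python
# Hypothetical mapping of roles to permissions, for illustration only.
ROLE_PERMISSIONS = {
    "clinician": {"read_audio", "read_demographics"},
    "data_scientist": {"read_audio"},
    "auditor": {"read_access_log"},
}


def is_allowed(role, permission, mfa_verified):
    """Grant access only if MFA succeeded and the role holds the permission."""
    if not mfa_verified:
        return False
    return permission in ROLE_PERMISSIONS.get(role, set())
```

In this sketch, keeping audio and demographic permissions separate mirrors the separate storage of the two data types: a role can be granted one without the other.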

We treat generalizability as a core performance criterion, not as a downstream aspiration. Our models are trained on multidisciplinary datasets and subjected to rigorous out-of-sample validation across diverse populations, recording devices, acoustic conditions, languages, dialects, and clinical settings. This is especially important in healthcare, where failure under distributional shift is not merely a technical weakness but a direct threat to safety, fairness, and clinical trust.
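One common way to test robustness across recording devices or sites is leave-one-group-out validation, in which each group is held out in turn so that the model is always evaluated on a condition it never saw in training. The sketch below shows only the splitting logic; the function name and the device labels are assumptions made for illustration.

```python
from collections import defaultdict


def leave_one_group_out(groups):
    """Yield (held_out_group, train_indices, test_indices) for each group."""
    by_group = defaultdict(list)
    for index, group in enumerate(groups):
        by_group[group].append(index)
    for held_out, test_idx in by_group.items():
        # Train on every sample that does not belong to the held-out group.
        train_idx = [i for g, idx in by_group.items() if g != held_out for i in idx]
        yield held_out, train_idx, test_idx


# Hypothetical recording device for each sample in a dataset.
devices = ["phone", "phone", "tablet", "headset", "tablet"]
splits = list(leave_one_group_out(devices))
```

The same function can be applied to language, dialect, or clinical site labels to probe other sources of distributional shift.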

This discipline is what enables clinical translation. By standardizing elicitation protocols, engineering features with expert input, and validating them rigorously, we develop biomarkers that are reproducible, clinically meaningful, and robust across populations, languages, and recording conditions. Instead of relying on ad hoc feature selection or opportunistic correlations, we build models on signals that remain interpretable, generalizable, and anchored in real-world pathology.

References

[1] Khan HT, Addo KM, Findlay H. Public health challenges and responses to the growing ageing populations. Public Health Challenges. 2024;3(3):e213. Back to text

[2] Centers for Disease Control and Prevention. Fast facts: Health and economic costs of chronic conditions [Internet]. 2025 [cited 2026 Apr 13]. Available from: https://www.cdc.gov/chronic-disease/data-research/facts-stats/index.html Back to text

[3] European Commission. Non-communicable diseases: overview [Internet]. [cited 2026 Apr 13]. Available from: https://health.ec.europa.eu/non-communicable-diseases/overview_en Back to text

[4] FDA-NIH Biomarker Working Group. BEST (Biomarkers, EndpointS, and other Tools) Resource [Internet]. Silver Spring (MD): U.S. Food and Drug Administration; 2016 [cited 2026 Apr 13]. Available from: https://www.ncbi.nlm.nih.gov/books/NBK326791 Back to text

[5] Vasudevan S, Saha A, Tarver ME, Patel B. Digital biomarkers: convergence of digital health technologies and biomarkers. NPJ Digit Med. 2022;5(1):36. Back to text

[6] Sara JDS, Orbelo D, Maor E, Lerman LO, Lerman A. Guess what we can hear — novel voice biomarkers for the remote detection of disease. Mayo Clin Proc. 2023;98(9):1353–75. Back to text

[7] Mohr DC, Zhang M, Schueller SM. Personal sensing: understanding mental health using ubiquitous sensors and machine learning. Annu Rev Clin Psychol. 2017;13:23–47. Back to text

[8] Akre S, et al. Advancing digital sensing in mental health research. NPJ Digit Med. 2024;7(1):362. Back to text

[9] Fraser KC, Meltzer JA, Rudzicz F. Linguistic features identify Alzheimer’s disease in narrative speech. J Alzheimers Dis. 2016;49(2):407–22. Back to text

[10] Boschi V, Catricala E, Consonni M, Chesi C, Moro A, Cappa SF. Connected speech in neurodegenerative language disorders: a review. Front Psychol. 2017;8:269. Back to text

[11] Mueller KD, Hermann B, Mecollari J, Turkstra LS. Connected speech and language in mild cognitive impairment and Alzheimer’s disease: a review of picture description tasks. J Clin Exp Neuropsychol. 2018;40(9):917–39. Back to text

[12] Clarke KM, Etemadmoghadam S, Danner B, et al. The Nun Study: insights from 30 years of aging and dementia research. Alzheimers Dement. 2025;21:e14626. Back to text

[13] Eyigoz E, Mathur S, Santamaria M, Cecchi G, Naylor M. Linguistic markers predict onset of Alzheimer’s disease. EClinicalMedicine. 2020;28:100583. Back to text

[14] Wiechmann D, Kerz E, Albrecht M, Qiao Y, Pinho J, Reetz K, et al. Detecting CSF-validated Alzheimer’s disease from spontaneous speech in German: an interpretable end-to-end machine-learning framework. Front Neurol. 2026;17:1780783. Back to text

[15] Bloem BR, Okun MS, Klein C. Parkinson’s disease. Lancet. 2021;397(10291):2284–303. Back to text

[16] Weintraub D, Burn DJ. Parkinson’s disease: the quintessential neuropsychiatric disorder. Mov Disord. 2011;26(6):1022–31. Back to text

[17] Santos García D, et al. Non-motor symptoms burden, mood, and gait problems are the most significant factors contributing to a poor quality of life in non-demented Parkinson’s disease patients: results from the COPPADIS Study Cohort. Parkinsonism Relat Disord. 2019;66:151–7. Back to text

[18] Ren J, Zhang W, Dahmani L, et al. Parkinson’s disease as a somato-cognitive action network disorder. Nature. 2026. https://doi.org/10.1038/s41586-025-10059-1 Back to text

[19] Cheng HC, Ulane CM, Burke RE. Clinical progression in Parkinson disease and the neurobiology of axons. Ann Neurol. 2010;67(6):715–25.

[20] Poewe W, Seppi K, Tanner CM, et al. Parkinson disease. Nat Rev Dis Primers. 2017;3:17013.

[21] Cao F, Vogel AP, Gharahkhani P, et al. Speech and language biomarkers for Parkinson’s disease prediction, early diagnosis and progression. NPJ Parkinsons Dis. 2025;11:57.

[22] Tomasik J, Zaki JK, Bahn S. New approaches to enhance the diagnosis of psychiatric disorders. Brain Med. 2026;1(aop):1–14.

[23] Kas MJH, Penninx BWJH, Sommer IEC, et al. Towards a biology-informed framework for mental disorders. Mol Psychiatry. 2025. https://doi.org/10.1038/s41380-025-03070-5

[24] Dukart J, et al. The many-to-many problem of endophenotypes in psychiatry. Mol Psychiatry. 2026. https://doi.org/10.1038/s41380-026-03473-y

[25] Nierenberg AA, Agustini B, Köhler-Forsberg O, et al. Diagnosis and treatment of bipolar disorder: a review. JAMA. 2023;330(14):1370–80.

[26] Keramatian K, et al. Duration of untreated or undiagnosed bipolar disorder and clinical characteristics and outcomes: systematic review and meta-analysis. Br J Psychiatry. 2024. https://doi.org/10.1192/bjp.2024.47

[27] Kim Y, et al. Deep neural network-based analysis of voice biomarkers for monitoring treatment response in adolescent major depressive disorder. Commun Med. 2026. https://doi.org/10.1038/s43856-025-01326-3

[28] Matcham F, Barattieri di San Pietro C, Bulgari V, De Girolamo G, Dobson R, Eriksson H, et al. Remote assessment of disease and relapse in major depressive disorder (RADAR-MDD): a multi-centre prospective cohort study protocol. BMC Psychiatry. 2019;19(1):72.

[29] Fekadu A, Demissie M, Birhane R, Medhin G, Bitew T, Hailemariam M, et al. Under-detection of depression in primary care settings in low and middle-income countries: a systematic review and meta-analysis. Syst Rev. 2022;11(1):21.

[30] Bambini V, Arcara G, Bechi M, Buonocore M, Cavallaro R, Bosia M. The communicative impairment as a core feature of schizophrenia: frequency of pragmatic deficit, cognitive substrates, and relation with quality of life. Compr Psychiatry. 2016;71:106–20.

[31] Tang SX, Kriz R, Cho S, et al. Natural language processing methods are sensitive to sub-clinical linguistic differences in schizophrenia spectrum disorders. Schizophrenia. 2021;7:25.

[32] Rajher R, Marinković M, Prelog PR, Žabkar J. Automated speech-fluency explanations for schizophrenia diagnosis. Sci Rep. 2025. https://doi.org/10.1038/s41598-025-33129-w

[33] Świtaj P, Anczewska M, Chrostek A, Sabariego C, Cieza A, Bickenbach J, et al. Disability and schizophrenia: a systematic review of experienced psychosocial difficulties. BMC Psychiatry. 2012;12(1):193.

[34] Hoseinipalangi Z, Golmohammadi Z, Rafiei S, Pashazadeh Kan F, Hosseinifard H, Rezaei S, et al. Global health-related quality of life in schizophrenia: systematic review and meta-analysis. BMJ Support Palliat Care. 2022;12(2):123–31.

[35] Bauser M, Kraus F, Koehler F, Rak K, Pryss R, Weiß C, et al. Voice assessment and vocal biomarkers in heart failure: a systematic review. Circ Heart Fail. 2025;18(8):e012303. https://doi.org/10.1161/CIRCHEARTFAILURE.124.012303

[36] Guo J, Peng W, Hu S, Lu D, Chen S. A novel machine learning-driven voice and clinical biomarkers framework for robust prediction of type 2 diabetes mellitus. J Voice. 2025. https://doi.org/10.1016/j.jvoice.2025.04.001

[37] Berisha V, Liss JM. Responsible development of clinical speech AI: bridging the gap between clinical research and technology. NPJ Digit Med. 2024;7:208. https://doi.org/10.1038/s41746-024-01199-1